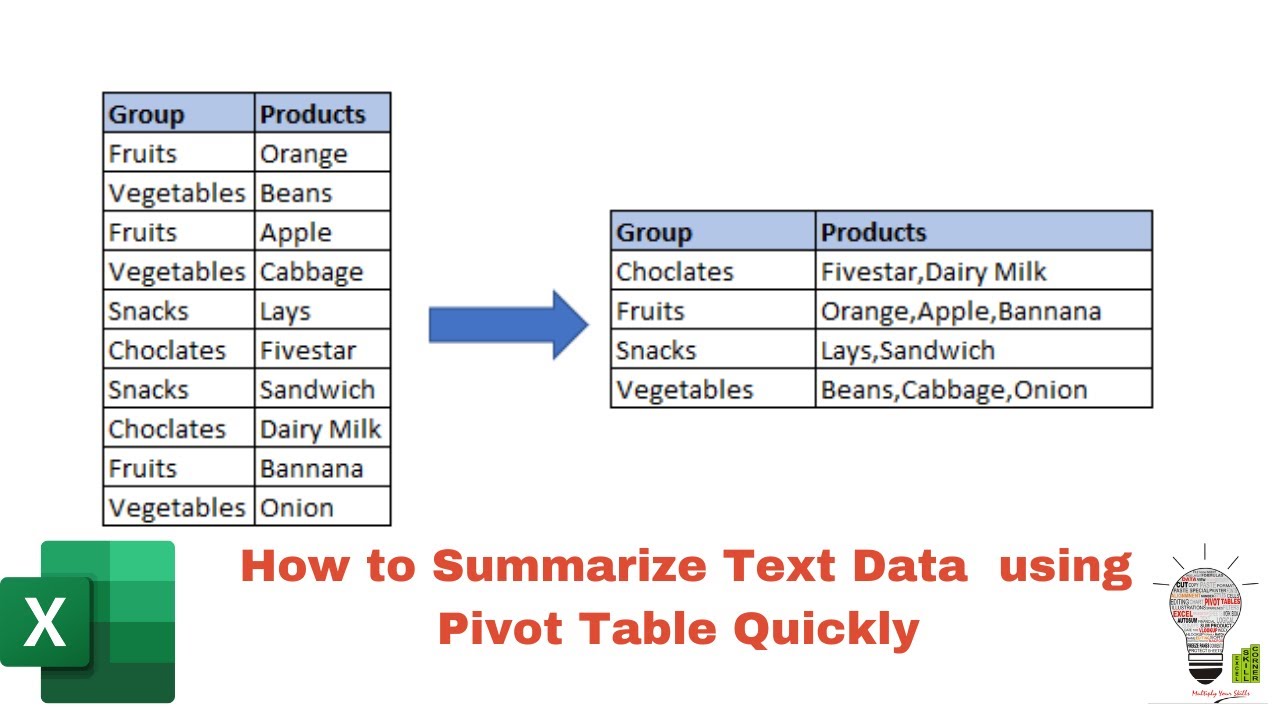

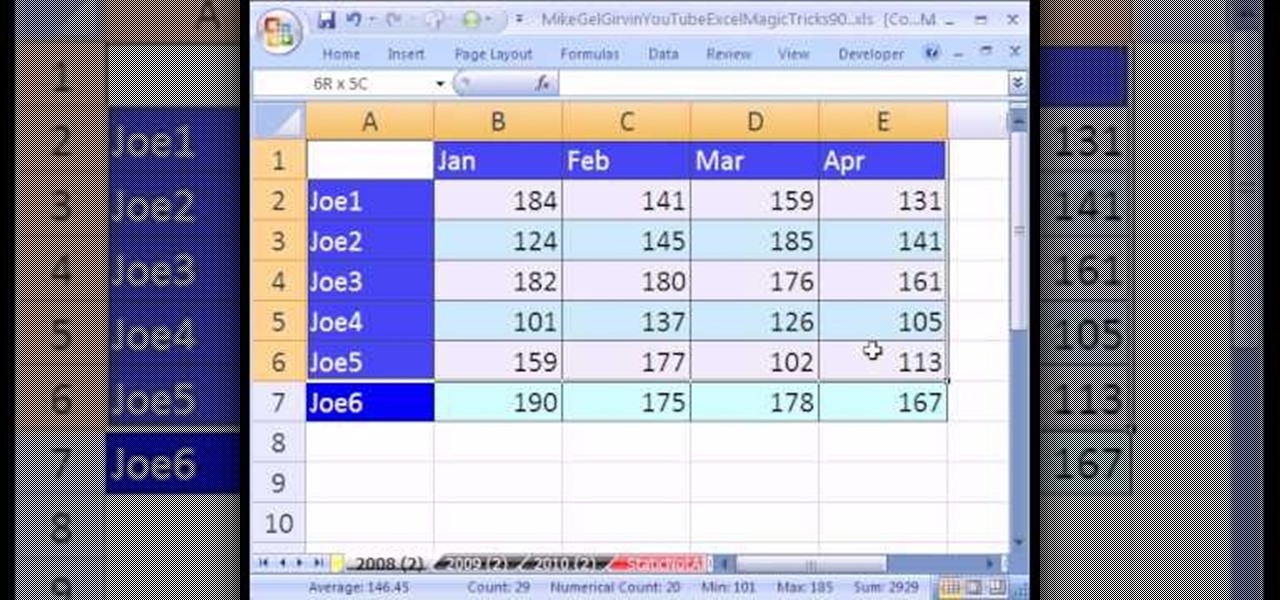

When I first sat down to write eparse, the objective was to create a library that could crawl and parse a large set of Excel files and extract information in context into storage for later consumption. To this end, we were fairly successful: eparse can extract sub-tabular information using a rules-based search algorithm and store labeled cells as rows in a database. Assuming the user has a good idea of what is contained in the source files, SQL queries or the eparse CLI can be used to retrieve specific data.

However, document Extraction, Transformation, and Loading (“ETL”) activities are becoming more generative AI-oriented to facilitate activities like document summarization and Retrieval-Augmented Generation (“RAG”). Given that most of the documents in question contain mostly text, which Large Language Models (“LLMs”) are well suited for, many of the ETL tools were built for this case. A typical “quickstart” workflow for these purposes is as follows:

Figure 1 - Typical AI-oriented ETL Workflow (source: ).

The process begins with an ETL tool set like unstructured, which identifies the document type, extracts the content as text, cleans the text, and returns one or more text elements. A second library, in this case langchain, then “chunks” the text elements into one or more documents that are stored, usually in a vectorstore such as Chroma. Finally, an LLM can be used to query the vectorstore to answer questions or summarize the content of the document.

This process works well for documents that contain mostly text. It does not work well for documents that contain mostly tabular data, such as spreadsheets. To better understand this problem, let’s consider an example.
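As a rough illustration of the extract, chunk, store, and query steps in the quickstart workflow described in this post, here is a minimal sketch that uses only the Python standard library. Everything in it is an assumption made for illustration: `extract_elements`, `chunk_elements`, `embed`, and `ToyVectorStore` are hypothetical stand-ins, not the APIs of unstructured, langchain, or Chroma, and the bag-of-words "embedding" replaces the learned embedding model a real vectorstore would use. The final step only retrieves the chunk that would be handed to an LLM; no model is called.

```python
import math
import re
from collections import Counter


def extract_elements(raw_text):
    """Hypothetical stand-in for extraction: split raw text into cleaned text elements."""
    elements = [" ".join(part.split()) for part in raw_text.split("\n\n")]
    return [element for element in elements if element]


def chunk_elements(elements, max_words=12):
    """Hypothetical stand-in for chunking: cut each element into fixed-size word windows."""
    chunks = []
    for element in elements:
        words = element.split()
        for start in range(0, len(words), max_words):
            chunks.append(" ".join(words[start:start + max_words]))
    return chunks


def embed(text):
    """Toy embedding: a bag-of-words count vector (an assumption, not a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """In-memory stand-in for a vectorstore: keeps (vector, chunk) pairs in a list."""

    def __init__(self):
        self.records = []

    def add(self, chunks):
        for chunk in chunks:
            self.records.append((embed(chunk), chunk))

    def query(self, question, k=1):
        # Rank stored chunks by similarity to the question and return the top k.
        question_vector = embed(question)
        ranked = sorted(
            self.records,
            key=lambda record: cosine(question_vector, record[0]),
            reverse=True,
        )
        return [chunk for _, chunk in ranked[:k]]


document = (
    "eparse crawls and parses Excel files.\n\n"
    "Retrieval-augmented generation passes retrieved chunks to a language model."
)

store = ToyVectorStore()
store.add(chunk_elements(extract_elements(document)))

# The retrieved chunk is what would be passed to an LLM as context.
top_chunk = store.query("Which tool parses Excel files?")[0]
print(top_chunk)
```

The sketch treats every document as a stream of words, which is the assumption that suits text-heavy files. Spreadsheet content has no comparable word order, so a fixed-size word window can separate a cell value from the row and column labels that give it meaning.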
